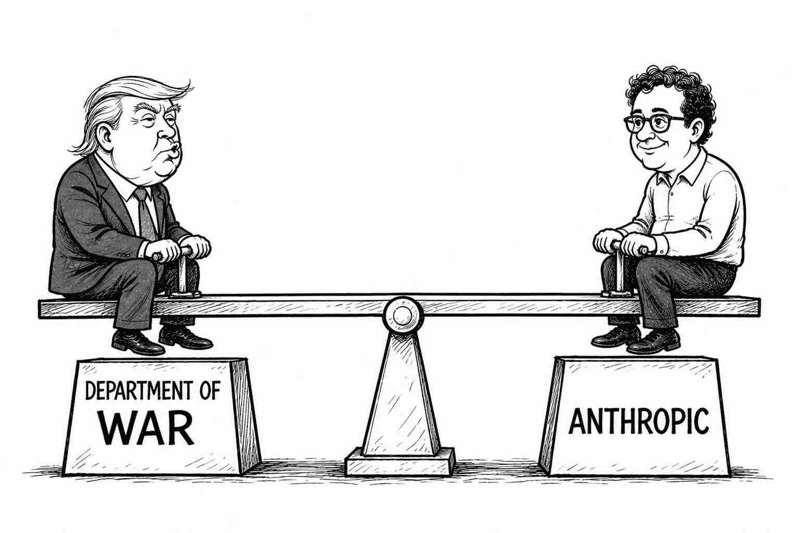

AI firm refuses to be America's murder and surveillance bot; U.S. Government loses the argument and is still mad about it.

This is Part 3 of our series on the Anthropic vs US Government case.

- Part 1: The Anthropic-Pentagon Showdown: How America's Most Safety-Obsessed AI Company Became a National Security Threat

- Part 2: Anthropic vs the US Government: Day One in the Courtroom

- Part 3: Anthropic Wins: Pentagon AI Ban Ruled Illegal (you are here)

In a shocking turn of events that anyone with a modicum of sensibility and a moral compass could have seen coming, Judge Rita Lin ruled last Thursday that labelling an American AI company a national security threat – because it had the audacity to say please don't use our tech to autonomously kill or spy on people – just wasn't on.

Judge Rita, who has now officially seen more drama than you'd get from binging a six-episode Netflix series on a wet Sunday afternoon, issued a preliminary injunction blocking the Trump administration's campaign to nuclear-option Anthropic. Her 43-page ruling, which let's be honest could have been two pages if it just said y'all are being ridiculous, declared the Government's actions "contrary to law, arbitrary and capricious." Bold words, your Honour.

Let's back up, because the full dramatic arc of this saga deserves to be appreciated.

Act 1: All we're asking is that you help us blow things up

Once upon a time (July last year) the Pentagon decided it quite liked the idea of using Claude, Anthropic's polite, safety-obsessed AI, for its shiny new GenAI platform. The Future of Warfare and Big Brother Tactics Too. Probably. There was just one small, barely-worth-mentioning problem.

Anthropic CEO Dario Amodei, a man who has staked his entire professional identity on the concept of AI safety, said: "Sure, you can use Claude, but maybe not for autonomous weapon systems or mass surveillance of citizens." The Pentagon, having heard this completely deranged request, responded the way any reasonable institution would: by designating Anthropic a supply chain risk to national security.

A designation that, as Judge Lin helpfully noted, is normally reserved for foreign intelligence agencies and state-sponsored cyber terrorists. And now, apparently, a San Francisco AI company whose safety guidelines include phrases like "be honest."

The Pentagon's logic, as detailed by Defence Secretary Pistol Packin' Pete Hegseth – as best as my legal analysis can reconstruct it – went something like: "What if, in the future, Anthropic installs a kill switch in Claude that makes it stop working? That would be bad. Therefore, they are a spy." I am not making this up.

For the full background on how Anthropic ended up here, read Part 1 of this series.

Act 2: The White House enters the chatroom

If the Pentagon's move was dramatic, the White House's contribution was Machiavellian. (Note to self: Derived from the C16th Italian philosopher Niccolò Machiavelli, specifically his political treatise *The Prince*, meaning a cunning, manipulative, and deceitful personality trait or political strategy prioritising self-interest, power, and efficiency over morality or ethics.)

In a display of rhetorical subtlety that would make, well, Machiavelli himself proud, Trump characterised Anthropic as "a woke company run by a group of left-wing nut jobs jeopardizing America's national security." I'm confused now. This is the same Anthropic that signed a $200m contract with the Pentagon last July – presumably passing some deep-dive crafty CIA due diligence – the same Anthropic that was the first AI company to deploy its technology across the Defence Department's classified networks.

Apparently "woke" means "declines to let robots decide who gets blown up without human oversight." Good to know. Filing that away under right-wing nut jobs. Getting agitated and into his stride, Trump then ordered all federal agencies to cut ties with Anthropic entirely – a totally measured response, and not at all the Government equivalent of rage-quitting a game of Monopoly and flipping the table.

Act 3: Anthropic goes to court

Having been branded a traitor to the people of America for the sin of having ethics, Anthropic did two things.

First, Dario Amodei called the Pentagon's actions "retaliatory and punitive" – which is what you say when you want to sound calm but are raging mad.

Second, Anthropic sued. Twice. In two different courts. Simultaneously. Because when you've been labelled America's enemy by the same country that paid you $200m eight months ago, you're not in the mood to file paperwork slowly.

The lawsuits argued that the supply chain risk designation, which not only blocked Government use of Claude but also required every defence contractor, including Amazon, Microsoft, and Palantir, to certify they don't use Anthropic's models, was illegal First Amendment retaliation. (Translation: the Government punished us for publicly disagreeing with them. That's not allowed. America has a document about this. It's quite famous.)

Anthropic's defence was the right to be helpful, harmless, and honest. Their legal team, consisting of three humans and a fleet of high-end GPUs running a specialised Litigation-GPT, was as morally superior as it was technically cool.

"Our model isn't trying to overthrow the Government. Furthermore, we argue that Claude has a First Amendment right to generate text, provided that text is properly hedged with at least four paragraphs of ethical disclaimers."

OK, so I made bits of that up, but you get the gist. The courtroom gasped when it was revealed that Claude had part-written the Anthropic defence brief while also teaching a group of court reporters how to bake sourdough. The moral high ground was so high it was legally categorised as a flight hazard.

For a full account of what happened in court on Day One, read Part 2 of this series.

Act 4: Court fisticuffs: a judge absolutely roasts the Government for their tantrumpic© behaviour

Judge Lin, who appeared to be operating on a cautious policy of "I have read the facts and I have questions," opened the proceedings by observing that the Pentagon's ban looks like an attempt to cripple Anthropic and stifle public debate. She then spent most of the hearing asking Government lawyers why an AI company that merely asked uncomfortable questions about drone warfare was now classified alongside foreign saboteurs.

The Government's lawyer explained that Anthropic was a risk because it was being stubborn and raising concerns about how its technology was being used. Judge Lin was having none of it, responding with what may be the quote of the year in tech litigation:

"What I'm hearing from you is that it's enough if an IT vendor is stubborn and insists on certain terms and asks annoying questions, then it can be designated as a supply chain risk because they might not be trustworthy. That seems a pretty low bar."

That seems a pretty low bar. Frame it. Add a picture of Dick Fosbury for humour. Sell the prints. Retire on the proceeds.

Act 5: The Ruling. Orwellian is a strong word but Lin used it anyway

Thursday's 43-page ruling did not pull punches. Lin said the Government's designation was "almost certainly illegal," calling it "likely contrary to law, arbitrary and capricious." She blocked both the supply chain risk label and the presidential directive ordering federal agencies to cut ties with Anthropic.

But the most magnificent sentence in the order, the one that will be quoted in law school lectures for years, was this gem:

"Nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government."

She also noted, with what one imagines was extraordinary judicial restraint, that if the Government's real concern was the integrity of the operational chain of command, the Department of Defence could simply stop using Claude. Problem solved. No need to designate anyone a spy.

"Punishing Anthropic for bringing public scrutiny to the Government's contracting position is classic illegal First Amendment retaliation," Lin concluded. Classic. She called it classic. Like Paranoid Android, only this time it's on the U.S. Government's Greatest Hits album, available in all formats including vinyl.

Epilogue: What does it all mean?

The injunction is paused for seven days to give the Government time to appeal, which means we'll have another day in court to listen to a Government reboot of its position before the finale.

The order means Anthropic's tools will continue to be used in the Government and by outside companies working with the military until the lawsuit is resolved. Defence Undersecretary Emil Michael called Judge Lin's order "a disgrace" and claimed it "contained dozens of factual errors," without citing any specifics. Brave man.

Policy experts say the ruling has broad implications. Jennifer Huddleston of the Cato Institute noted that the preliminary injunction suggests the judge believes Anthropic is likely to succeed on the merits – which is a polite way of saying the Government's case is in absolute tatters.

Outside the court, reaction was polarised. On one side, the long-haired scruffy folk from the "Humanity First" camp held placards declaring that the end of the world is nigh. On the other, the long-haired scruffy folk from the "Accelerate or Die" crowd were handing out stickers that read: Claude for President '28.

Anthropic issued a statement with the grace of a company that has won the first round but knows the fight isn't over: "We're grateful to the court for moving swiftly, and pleased they agree Anthropic is likely to succeed on the merits. While this case was necessary to protect Anthropic, our customers and partners, our focus remains on working productively with the Government."

Translation: we won, but we're not gloating. We are, of course, definitely gloating a little – internally, in the Slack channels the judge will never see.

The Pentagon did not respond to requests for comment. Which is a reasonable response when a federal judge has just described your actions as Orwellian.

The full series:

- Part 1: The Anthropic-Pentagon Showdown

- Part 2: Anthropic vs the US Government: Day One in the Courtroom

- Part 3: Anthropic Wins: Pentagon AI Ban Ruled Illegal (you are here)

Disclaimer

This article is nothing more than an attempt at satirical comment on an interesting legal case. All quotes from the ruling, the hearing, and court submissions are drawn from reporting by CNBC, NPR, TechCrunch, and Bloomberg. Neither the author nor Fuzzy Labs owns a kill switch. Come back here for the next instalment.
