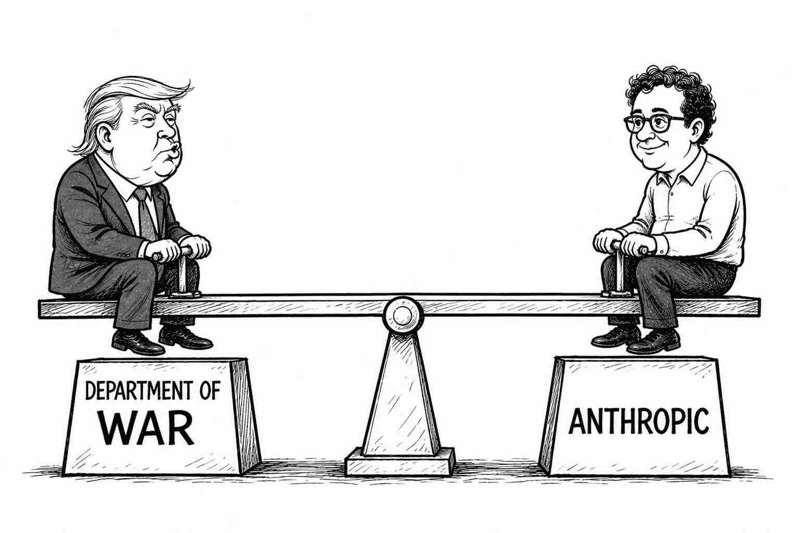

Few disputes in recent memory have exposed the fault lines between a tech business’s ethics and values and a government’s national security imperatives as sharply as the ongoing clash between Anthropic and the U.S. Department of Defence, now also known as the Department of War. What began as a contract negotiation has escalated into a public legal battle, a presidential directive, and a broader reckoning over who gets to set the rules on how AI is used in military conflict.

How it started: A $200m partnership

Founded in 2021 by seven former employees of OpenAI, including siblings Daniela Amodei and Dario Amodei, Anthropic bills itself as an AI safety company. In July 2025 it signed a two-year, $200m contract with the U.S. Department of Defence to help prototype frontier AI capabilities that advance U.S. national security.

Anthropic develops the Claude family of large language models (LLMs). It studies the safety properties of AI at the technological frontier and uses that research to deploy safe models for the public. Claude became the first AI model approved to operate on classified military networks.

CEO Dario Amodei has consistently struck a hawkish-but-cautious tone. In January he said democracies have a legitimate interest in some AI-powered military and geopolitical tools, arguing that democracies should be armed with AI, but carefully and within limits. Those limits, it turned out, would become the crux of an extraordinary rupture.

The trigger: Venezuela and guardrails

After the U.S. raid on Venezuela in early January that captured dictator Nicolás Maduro, Anthropic asked Palantir whether Anthropic’s AI had been used in the operation. The company described its inquiry as routine due diligence, consistent with a safety-focused company wanting to understand how its models are being deployed.

This ruffled feathers at the Pentagon and Palantir, which interpreted the request very differently. It prompted a sudden realisation: if Anthropic’s software had gone down, or some guardrail had triggered a refusal during an operation, American troops could have been at risk. Defence Secretary Pete Hegseth described this as a ‘whoa moment’ at the Pentagon.

This crystallised a simmering concern that Anthropic, a private company with its own ethical principles baked into its products, could at any moment limit, modify, or withdraw capabilities during a live military operation. For the U.S. military, whose doctrine is built on reliability and redundancy, that was an unacceptable vulnerability.

The ultimatum and stand-off

On February 24, Hegseth gave Dario Amodei a deadline: relent by 5:01pm on February 27 and allow unrestricted use of the company’s AI models for all legal purposes. The sticking points were two issues that Anthropic treated as absolute red lines:

- the use of its AI for autonomous weapons systems that could make lethal decisions without meaningful human authorisation;

- the deployment of its technology for mass domestic surveillance of American citizens.

The tension was rooted in a broader clash over the future use of Anthropic’s systems. Hegseth had emphasised his desire to use all available AI systems for any purpose allowed by law, while Anthropic wanted to maintain its own guardrails. The Pentagon’s position was that it was asking for nothing more than what the law already permitted, and that it had no intention of using AI for mass surveillance or fully autonomous killing machines. Anthropic was not willing to hand over that discretion.

Anthropic released a statement on February 26 indicating it would not budge. The following day, President Trump directed via Presidential Order that all federal agencies immediately cease using Anthropic’s systems, and Hegseth designated the company a supply chain risk.

The fallout

The Presidential Order, delivered in Trump style via social media rather than through formal regulatory channels, sent federal agencies scrambling. Nearly three weeks passed before formal guidance was issued on how to proceed. The disruption went well beyond bureaucratic inconvenience.

Tasks previously handled by Claude, such as querying large datasets, were now being done manually with tools such as Microsoft Excel. Technologists at the Department of Defence hated the decision because they had only just got operators comfortable using AI. Some staff were slow-rolling the replacement of Claude because they were actively using it to build workflows. One CIO told reporters they planned to delay the phase-out, betting that a deal would be struck before the deadline expired.

The legal battle

On March 9, Anthropic escalated the dispute into formal court proceedings, arguing the administration’s actions had caused it irreparable harm and requesting an injunction against the supply chain risk designation. It argued that the designation violated its First Amendment rights by punishing the company for publicly stating its ethics.

The Pentagon responded with a 40-page court filing laying out its national security case, expressing concern that Anthropic might attempt to disable its technology or preemptively alter the behaviour of its model during critical military engagements if the company believed its ethical boundaries were being violated.

Anthropic’s court filings punched back hard. Head of Policy Sarah Heck, who was present at the February 24 meeting between Amodei and Hegseth, filed a sworn declaration calling out what she described as a central falsehood in the government’s filings: that Anthropic had demanded an approval role over military operations. ‘At no time during Anthropic’s negotiations with the Department did I or any Anthropic employee state that the company wanted that kind of role,’ she said.

Perhaps most damaging was a timeline Heck laid out in her declaration. On March 4, the day after the Pentagon issued its supply-chain risk designation, Under Secretary Michael emailed Amodei to say the two sides were very close on the two issues the Government now cites as evidence that Anthropic is a national security threat.

The contradiction was striking: if Anthropic’s stance on those issues made it a genuine security threat, why was a senior Pentagon official saying the two sides were nearly in agreement the very day after the designation was finalised?

The industry rallies

The tech industry has watched these proceedings with considerable anxiety, and many have moved beyond quiet concern to active opposition. Major tech industry groups representing companies with Pentagon contracts filed an amicus brief, calling for a pause on the designation.

Microsoft filed a separate court brief supporting Anthropic. Google and Amazon, both investors in Anthropic, stated their customers could continue using Anthropic’s technology for non-defence work. Even OpenAI CEO Sam Altman, who stands to benefit commercially if Anthropic is frozen out of government work, reportedly said he disagreed with the Pentagon’s designation.

What it all means

A hearing on whether to grant Anthropic a preliminary injunction is set for March 24 before Judge Rita Lin in San Francisco.

This dispute is not simply a contract squabble or a culture-war skirmish. Rather, it is a test case that will define the relationship between AI companies and governments for the foreseeable future. The fallout highlights the clash of cultures between the establishment and tech. It has also intensified an alarming trend: President Trump conveyed ambivalence about the rapid development of AI when, in his Presidential Order, he walloped the ‘leftwing nut jobs’ of Anthropic.

The spat is not good for the American government. Anthropic was the only AI lab whose models had been cleared for use on classified military data until late February, when the Pentagon gave xAI similar authorisation. xAI’s LLM, Grok, is widely considered buggier and less reliable. Worse, the contradiction is clear: if the U.S. Government’s paramount goal is to preserve and extend America’s lead in AI, then trying to squash one of the country’s most successful AI firms seems myopic and hugely counterproductive.

Both sides are posturing to a degree. The government’s fury appears to be driven by outrage at being told ‘No’, whilst the egos of tech leaders may be wielding ideology as weaponry because they can. Maybe Anthropic should build safeguards into its models to prevent such uses, dispensing with any need for further legal guarantees?

The row has boosted Anthropic’s reputation for probity: within a week of earning Trump’s ire, Claude became the most downloaded free app in America on the App Store. AI leaders fret about a ‘Chernobyl moment’ in which AI triggers a catastrophe such as a cybersecurity disaster, and qualms about the harm AI might do to civil liberties are real. Staff at OpenAI and Google have signed a public letter urging the leadership at both companies to support Anthropic.

The irony is considerable. Within the industry, Anthropic is widely considered to be the most serious and proactive about policing threats from cyber-attacks, yet it is Anthropic, not its less safety-focused competitors, that now finds itself labelled an unacceptable security risk. The outcome of the March 24 hearing will be the first significant legal test of this uncharted territory.